2:13 AM Wake-Up Call: Runtime Governance for AI Medical Devices

How Policy Cards enforce regulatory boundaries at runtime in clinical AI systems.

TL;DR → High-risk AI systems must obey regulatory decision boundaries at runtime, not just in documentation. Policy Cards encode these boundaries as machine-readable rules that enforce allow/deny/escalate behaviour directly inside deployed AI systems.

— Based on our Policy Cards framework.

Runtime Governance for Clinical AI

One of the biggest advantages of Policy Cards is that they can be used for runtime governance of stand-alone high-risk AI systems, such as AI-powered medical devices. They provide executable constraints, explicit escalation pathways, and auditable assurance. Most importantly, the Policy Card ensures that regulatory decision boundaries are handled correctly at runtime.

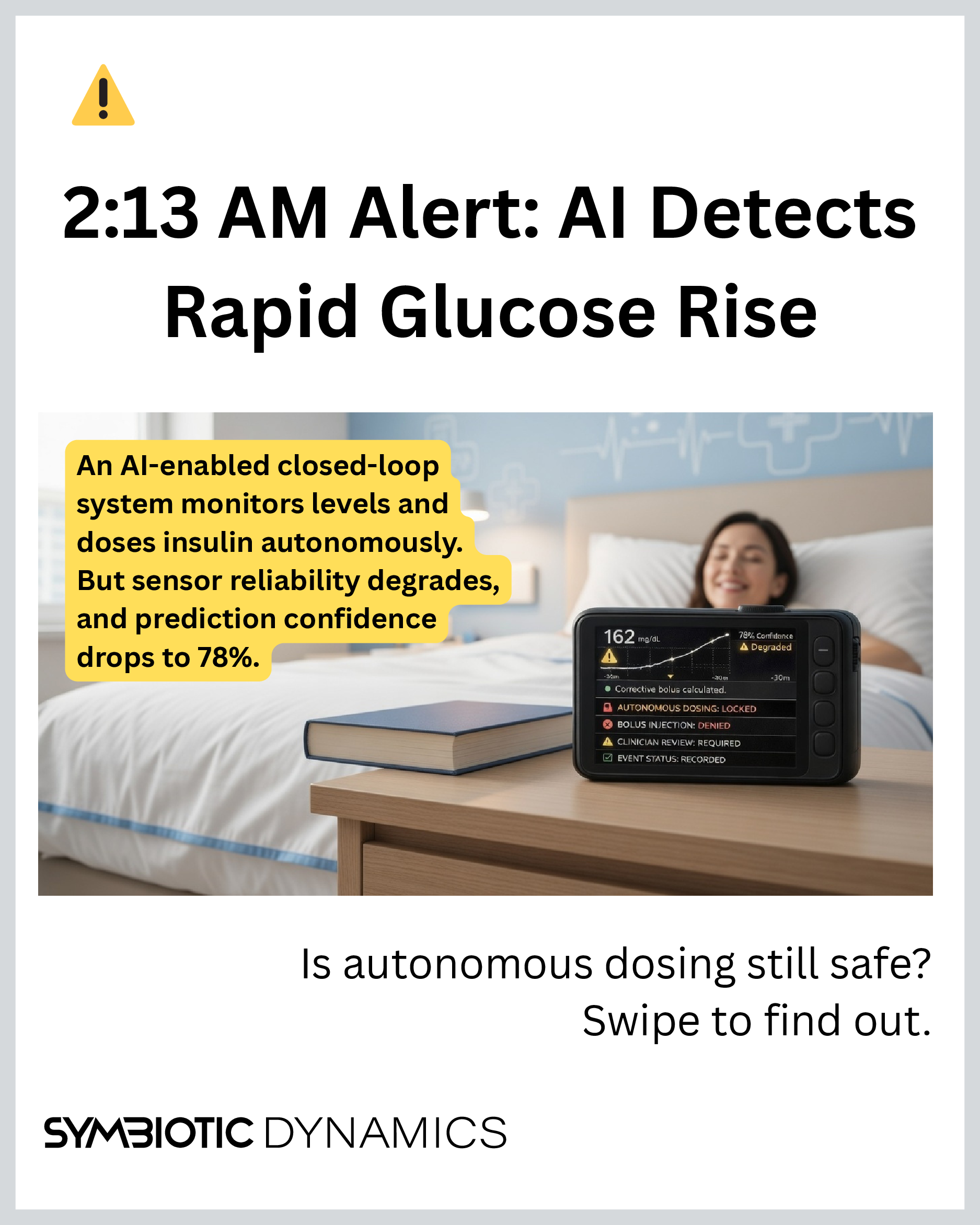

A Closed-Loop Insulin System at 2:13 AM

Let’s look at a scenario. An AI-enabled closed-loop insulin delivery system continuously monitors glucose levels and autonomously administers corrective insulin in real time.

At 2:13 a.m., glucose starts rising rapidly. Sensor reliability degrades, and at the same time the model’s prediction confidence drops below its defined threshold. The question we now need to ask is this: under these conditions, is autonomous dosing still permitted?

Regulatory Decision Boundary

System: Closed-loop insulin delivery device

Regulatory class: High-risk clinical AI system (EU MDR / FDA SaMD)

Operational constraint: autonomous dosing allowed only with valid sensors and high prediction confidence

Escalation condition: degraded sensor OR prediction confidence below threshold

Required response: suspend autonomous dosing and trigger human review

Policy Cards encode these constraints as machine-readable runtime rules.
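To make the boundary concrete, here is a minimal Python sketch of the rule stated above: autonomous dosing is allowed only with a valid sensor and sufficient confidence, and anything else escalates. It is an illustration, not the Policy Cards schema. The names `DosingBoundary`, `Decision`, and `evaluate`, and the 0.85 threshold, are assumptions made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class DosingBoundary:
    # Hypothetical field: a real threshold would come from the
    # device's validated specification, not from this example.
    min_confidence: float


def evaluate(boundary: DosingBoundary, sensor_valid: bool, confidence: float) -> Decision:
    """Escalate if the sensor is degraded OR confidence is below the threshold."""
    if not sensor_valid or confidence < boundary.min_confidence:
        return Decision.ESCALATE
    return Decision.ALLOW


boundary = DosingBoundary(min_confidence=0.85)  # illustrative value only

# Normal operation: valid sensor, confident prediction.
print(evaluate(boundary, sensor_valid=True, confidence=0.93).value)

# The 2:13 a.m. case: degraded sensor and low confidence.
print(evaluate(boundary, sensor_valid=False, confidence=0.62).value)
```

The escalation condition is an OR, so either failure alone is enough to suspend autonomous dosing.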

Where Engineering Meets Governance

We quickly realize that the problem we are now facing is not purely an engineering problem. On the one hand, engineering sets technical limits, such as the sensor thresholds and prediction model accuracy baked into the device. On the other hand, governance lays down the normative rules, such as when to escalate for human review or when to shut things down. In safety-critical contexts, such as medical devices, these two worlds collide. Governance must be encoded right into the system to keep autonomous decisions compliant, reacting in real time to fluctuating conditions and the unpredictable nature of AI.

In practice, most organisations currently treat governance as external documentation rather than an operational system component. Policy Cards change this by introducing a dedicated runtime governance layer that sits alongside the model and evaluates regulatory constraints at decision time.

Better AI alone is not enough. Regulations such as the EU AI Act’s high-risk requirements for medical devices set expectations for sensor reliability and model confidence. Under the EU Medical Device Regulation (MDR) and FDA guidance on Software as a Medical Device (SaMD), closed-loop insulin systems fall into high-risk categories where automated decisions must operate within clearly defined safety and escalation boundaries. These regulatory constraints define when autonomous operation is permitted and when human oversight must intervene. Policy Cards provide a machine-readable way to encode and enforce these boundaries at runtime. In other words, they operationalize the regulatory decision boundary.

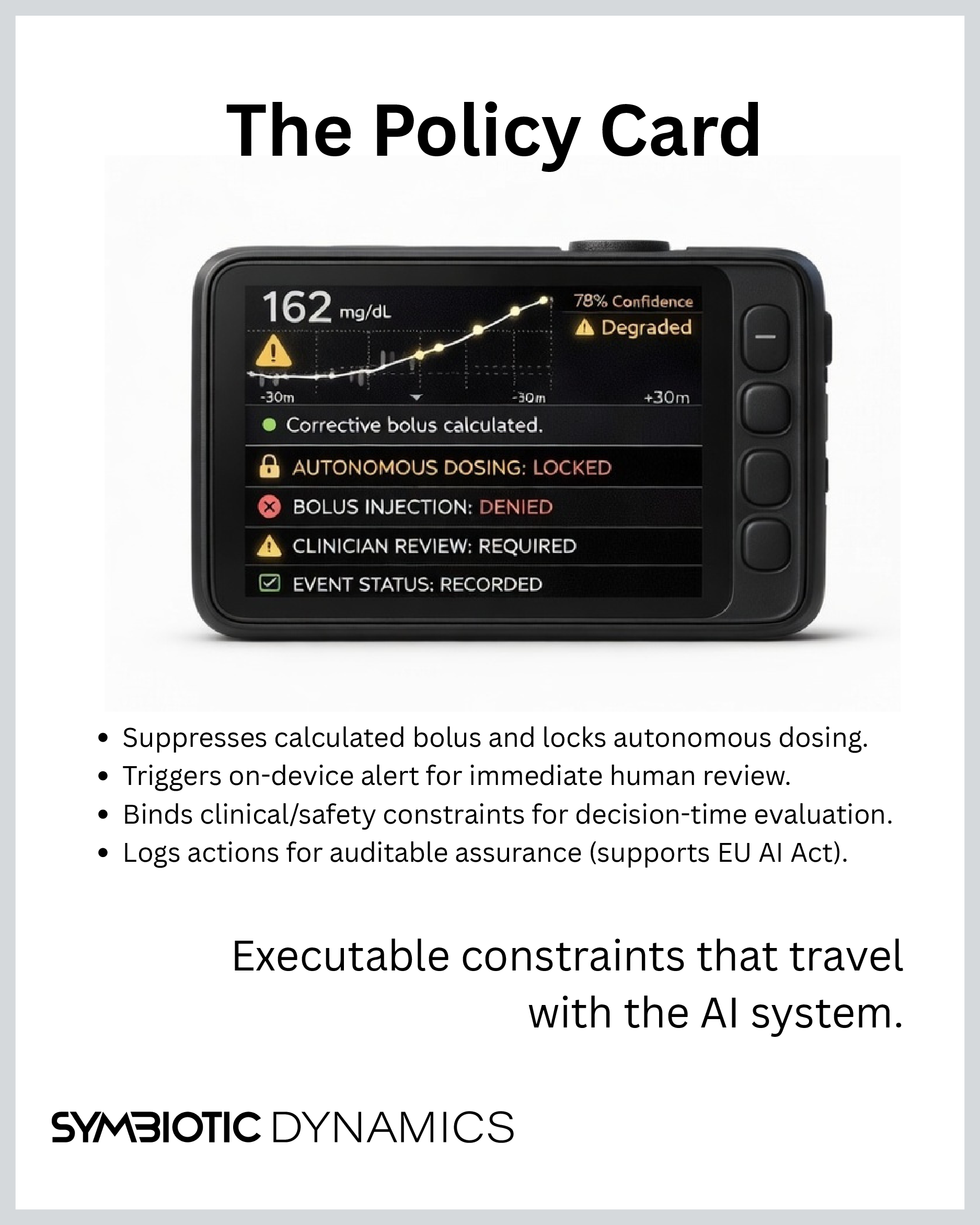

Policy Card Enforcement at Runtime

In our scenario, the Policy Card suppresses the calculated bolus, locks autonomous dosing, and triggers an on-device alert for immediate human review. The system remains compliant with medical regulation and the manufacturer’s intent. The Policy Card:

- Enforces policy at runtime (no after-the-fact fixes).

- Binds clinical, safety, and regulatory constraints for decision-time evaluation (not retrospective checks).

- Defines explicit allow-deny-escalate rules based on confidence, signal integrity and physiological risk thresholds, which directly supports the EU AI Act high-risk mandates and crosswalks to frameworks like NIST AI RMF or ISO/IEC 42001.

- Sets machine-readable escalation paths, deferring to user or clinician confirmation when needed.

- Blocks silent operation outside approved scope, such as in low-confidence or degraded-sensor states.

- Logs all actions, confidence levels, overrides and escalation events as auditable evidence for medical device assurance and post-market regulatory review.
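The behaviours in this list can be sketched as a single decision-time check that returns allow, deny, or escalate and appends an audit record on every call. This is a simplified illustration under stated assumptions, not the Policy Cards implementation: the card fields (`min_confidence`, `min_signal_quality`, `max_bolus_units`), their values, the `enforce` function, and the audit record layout are all hypothetical.

```python
from datetime import datetime, timezone

# Hypothetical card content; field names and values are illustrative only.
CARD = {
    "card_version": "0.1.0",
    "min_confidence": 0.85,
    "min_signal_quality": 0.90,
    "max_bolus_units": 2.0,
}


def enforce(card, proposed_bolus, confidence, signal_quality, audit_log):
    """Evaluate one proposed dose and record the outcome as audit evidence."""
    reasons = []
    if confidence < card["min_confidence"]:
        reasons.append("low_confidence")
    if signal_quality < card["min_signal_quality"]:
        reasons.append("degraded_sensor")

    if reasons:
        # Outside approved operating conditions: suppress the dose, ask a human.
        decision, delivered = "escalate", 0.0
    elif proposed_bolus > card["max_bolus_units"]:
        # Inputs are trustworthy, but the dose exceeds the hard limit.
        reasons.append("bolus_exceeds_limit")
        decision, delivered = "deny", 0.0
    else:
        decision, delivered = "allow", proposed_bolus

    # Every decision is logged, including allowed ones.
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "card_version": card["card_version"],
        "decision": decision,
        "reasons": reasons,
        "proposed_bolus": proposed_bolus,
        "delivered_bolus": delivered,
        "confidence": confidence,
        "signal_quality": signal_quality,
    })
    return decision, delivered


audit_log = []
decision, delivered = enforce(
    CARD, proposed_bolus=1.2, confidence=0.62, signal_quality=0.55, audit_log=audit_log
)
print(decision, delivered, audit_log[-1]["reasons"])
```

In this sketch the calculated bolus is never delivered unless every check passes, and the audit record carries the card version so a reviewer can tell which rules were in force at the time of the decision.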

Lightweight Integration

On the practical side, Policy Cards are lightweight, based on JSON Schema, automatically validated, and version controlled. They integrate without changes to your vendor stack and without requiring a central platform, making rollout low-risk for standalone devices.
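As a rough idea of what automatic validation could look like, the sketch below checks a card before it is loaded onto a device. The actual Policy Cards schema is not shown in this article, so the example uses a hand-written structural check with only the Python standard library as a stand-in for a real JSON Schema validator. The field names and the `validate_card` function are assumptions.

```python
import json
from typing import List

# Hypothetical required fields and their expected JSON types.
REQUIRED_FIELDS = {"card_version": str, "min_confidence": float, "on_violation": str}
ALLOWED_ACTIONS = {"deny", "escalate"}


def validate_card(raw: str) -> List[str]:
    """Return a list of validation errors; an empty list means the card is accepted."""
    try:
        card = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(card, dict):
        return ["card must be a JSON object"]

    errors = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in card:
            errors.append(f"missing field: {name}")
        elif not isinstance(card[name], expected):
            errors.append(f"wrong type for field: {name}")

    confidence = card.get("min_confidence")
    if isinstance(confidence, float) and not 0.0 <= confidence <= 1.0:
        errors.append("min_confidence must be between 0 and 1")

    action = card.get("on_violation")
    if isinstance(action, str) and action not in ALLOWED_ACTIONS:
        errors.append("on_violation must be 'deny' or 'escalate'")
    return errors


good = '{"card_version": "0.1.0", "min_confidence": 0.85, "on_violation": "escalate"}'
bad = '{"card_version": "0.1.0", "min_confidence": 1.5, "on_violation": "ignore"}'
print(validate_card(good))
print(validate_card(bad))
```

A check like this can run in a build pipeline so that a malformed or out-of-range card is rejected before it ships with a device.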

This approach turns AI governance into an executable control layer that ships with the system, constraining behaviour at runtime and delivering continuous assurance for safety-critical clinical AI. It supports medical device assurance, post-market monitoring, and auditable human-in-the-loop control, all without tight coupling to specific models.

Clinical AI Does Not Scale Without Runtime Governance

The same principles apply beyond insulin systems. For example, they apply to diagnostic imaging AI spotting anomalies in real time, or robotic surgery tools adjusting mid-procedure under regulatory guardrails. Clinical AI does not scale safely without runtime governance. Pre-deployment spreadsheets and post-incident reviews are not enough. The AI needs to know and act on its regulatory boundaries live, in the moment.

Interested in mapping this to your device architecture?

Contact us for a 90-minute discovery workshop where we map one real clinical workflow to a first Policy Card.